Save Time & Resources

Identify skills gaps faster and reduce manual admin with pre-built questions and automated training assignments.

Solutions

Solutions for

K-12

K-12

Learn MoreEducator & Staff Training

Educator & Staff Training

Improve compliance and deliver critical professional development with online courses and management system

Learn moreStudent Safety & Wellness Program

Student Safety & Wellness Program

Keep students safe and healthy with safety, well-being, and social and emotional learning courses and lessons

Learn moreProfessional Growth Management

Professional Growth Management

Integrated software to manage and track evaluations and professional development and deliver online training

Learn moreIncident & EHS Management

Incident & EHS Management

Streamline safety incident reporting and management to improve safety, reduce risk, and increase compliance

Learn moreCareer & Technical Education NEW

Career and Technical Education Solutions

Maximize student outcomes with an all-in-one work-based learning platform and courses on relevant skills and OSHA credentials.

Learn moreHigher Education

Higher Education

Learn MoreStudent Training

Student Training

Increase safety, well-being, and belonging with proven-effective training on critical prevention topics

Learn moreFaculty & Staff Training

Faculty & Staff Training

Create a safe, healthy, and welcoming campus environment and improve compliance with online training courses

Learn moreCampus Climate Surveys

Campus Climate Surveys

Simplify VAWA compliance with easy, scalable survey deployment, tracking, and reporting

Learn moreIncident & EHS Management

Incident & EHS Management

Streamline safety incident reporting and management to improve safety, reduce risk, and increase compliance

Learn moreProfessional Development Tracking

Professional Development Tracking

Efficiently plan, manage, track, and evaluate all professional development events and activities.

Learn moreFaculty & Staff Evaluations

Faculty & Staff Evaluations

Easily manage the evaluation and development process from start to finish.

Learn moreManufacturing

Manufacturing

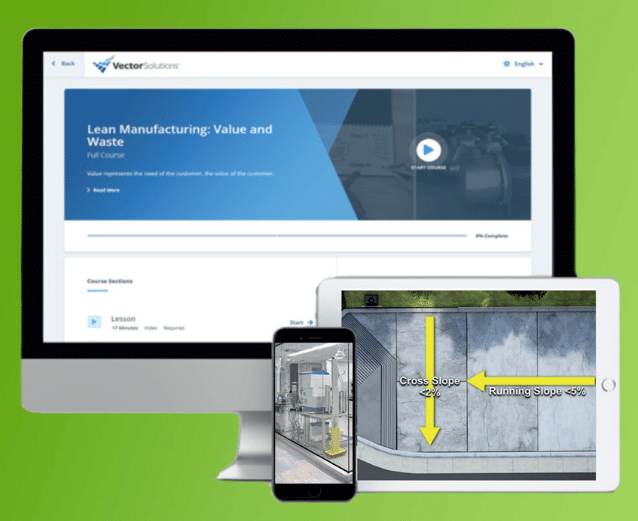

Learn MoreSafety Training

Safety Training

Elevate performance and productivity while reducing risk across your entire organization with online training.

Learn moreFood Safety Training

Food Safety Training

Empower your workforce with industry-leading training solutions designed for Food and Beverage Manufacturing.

Learn moreIndustrial Skills Training

Industrial Skills Training

Close skills gap, maximize production, and drive consistency with online training

Learn morePaper Manufacturing Training

Paper Manufacturing Training

Enhance worker expertise and problem-solving skills while ensuring optimal production efficiency.

Learn moreHR & Compliance

Provide role-specific knowledge, develop skills, and improve employee retention with career development training.

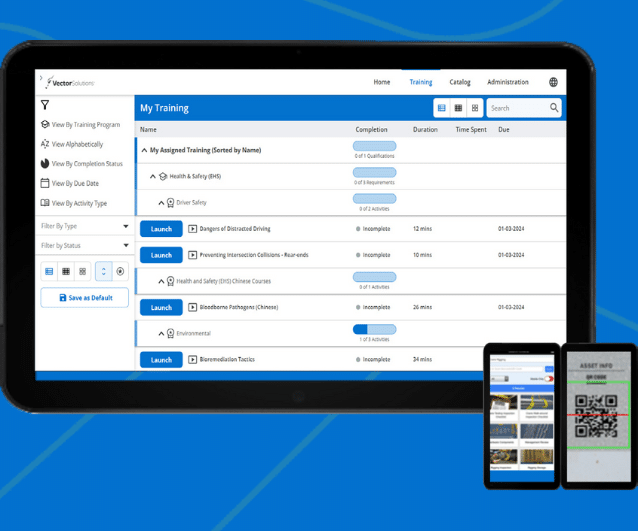

Learning Management System (LMS)

Learning Management System (LMS)

Assign, track, and report role-based skills and compliance training for the entire workforce

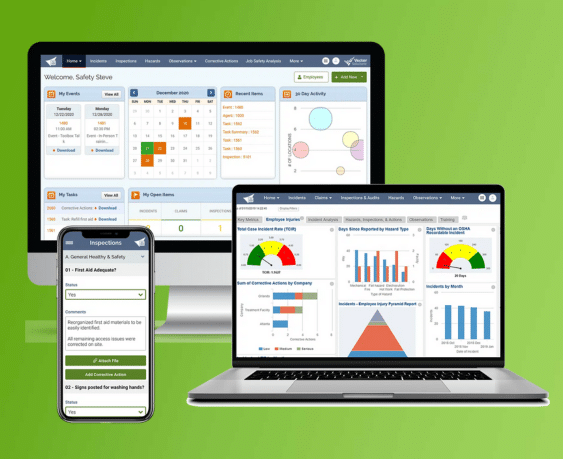

Learn moreEHS Management

EHS Management

Track, Analyze, Report Health and Safety Activities and Data for the Industrial Workforce

Learn moreFire Departments

Fire Departments

Learn MoreTraining Management

Training Management

A training management system tailored for the fire service—track all training, EMS recerts, skill evaluations, ISO, and more for 100% of training in one place.

Learn moreCrew Shift Scheduling

Crew Shift Scheduling

Simplify 24/7 staffing and give firefighters the convenience of accepting callbacks and shifts from a mobile device

Learn moreChecks & Inventory Management

Checks & Inventory Management

Streamline truck checks, PPE inspections, controlled substance tracking, and equipment maintenance with a convenient mobile app

Learn moreExposure and Critical Incident Monitoring

Exposure and Critical Incident Monitoring

Document exposures and critical incidents and protect your personnel’s mental and physical wellness.

Learn moreEMS

EMS

Learn MoreTraining Management and Recertification

Training Management and Recertification

A training management system tailored for EMS services—EMS online courses for recerts, mobile-enabled skill evaluations, and more for 100% of training in one place.

Learn moreEMS Shift Scheduling

EMS Shift Scheduling

Simplify 24/7 staffing and give medics the convenience of managing their schedules from a mobile device

Learn moreInventory Management

Inventory Management

Streamline vehicle checks, controlled substance tracking, and equipment maintenance with a convenient mobile app

Learn moreWellness Monitoring & Exposure Tracking

Wellness Monitoring & Exposure Tracking

Document exposures and critical incidents and protect your personnels’ mental and physical wellness

Learn moreLaw Enforcement

Law Enforcement

Learn MoreTraining and FTO Management

Training and FTO Management

Increase performance, reduce risk, and ensure compliance with a training management system tailored for your FTO/PTO and in-service training for 100% of training in one place.

Learn moreProfessional Standards and Early Intervention

Professional Standards and Early Intervention

Equip leaders with a tool for performance management and early intervention that helps build positive agency culture

Learn moreOfficer Shift Scheduling

Officer Shift Scheduling

Simplify 24/7 staffing and give officers the convenience of managing their schedules from a mobile device

Learn moreAsset Management & Inspections

Asset Mangagement & Inspections

Streamline equipment checks and vehicle maintenance to ensure everything is working correctly and serviced regularly

Learn morePolicy Management NEW

Policy Management

Manage, distribute, and track all agency policies and memos with a purpose-built solution for law enforcement

Learn morePerformance Management NEW

Performance Management

Modernize and improve any performance review process to ensure consistent, high-quality evaluations for every officer

Learn moreBWC Audits NEW

BWC Audits

Enhance accountability with pre-loaded BWC and MVR evaluation forms for consistent video review and evaluation

Learn moreCommunity Policing NEW

Community Policing

Enhance citizen transparency, trust, and satisfaction while also reducing call volumes and administrative burdens

Learn moreAccreditation Management NEW

Accreditation Management

Streamline and simplify accreditation management for local departments and state accreditation bodies.

Learn moreEnergy

Learn MoreSafety Training

Safety Training

Elevate performance and productivity while reducing risk across your entire organization with online training.

Learn moreEnergy Skills Training NEW

Energy Skills Training

Empower your team with skills and safety training to ensure compliance and continuous advancement.

Learn moreHR & Compliance

Provide role-specific knowledge, develop skills, and improve employee retention with career development training.

Learning Management System (LMS)

Learning Management System (LMS)

Assign, track, and report role-based skills and compliance training for the entire workforce

Learn moreEHS Management

EHS Management

Track, analyze, report health and safety activities and data for the industrial workforce

Learn moreGovernment

Learn MoreFederal Training Management

Federal Training Management

Lower training costs and increase readiness with a unified system designed for high-risk, complex training and compliance operations.

Learn moreMilitary Training Management

Military Training Management

Increase mission-readiness and operational efficiency with a unified system that optimizes military training and certification operations.

Learn moreLocal Government Training Management

Local Government Training Management

Technology to train, prepare, and retain your people

Learn moreFire Marshal Training & Compliance

Fire Marshal Training & Compliance

Improve fire service certification and renewal operations to ensure compliance and a get a comprehensive single source of truth.

Learn moreFire Academy Automation

Fire Academy Automation

Elevate fire academy training with automation software, enhancing efficiency and compliance.

Learn morePOST Training & Compliance

POST Training & Compliance

Streamline your training and standards operations to ensure compliance and put an end to siloed data.

Learn moreLaw Enforcement Academy Automation

Law Enforcement Academy Automation

Modernize law enforcement training with automation software that optimizing processes and centralizes academy information in one system.

Learn moreEHS Management

EHS Management

Simplify incident reporting to OSHA and reduce risk with detailed investigation management.

Learn moreArchitecture, Engineering & Construction

Architecture, Engineering & Construction

Learn MoreLearning Management System (LMS)

Learning Management System (LMS)

Ensure licensed professionals receive compliance and CE training via online courses and learning management.

Learn moreOnline Continuing Education

Online Continuing Education

Keep AEC staff licensed in all 50 states for 100+ certifications with online training

Learn moreTraining

Training

Drive organizational success with training that grows skills and aligns with the latest codes and standards

Learn moreEHS Management

EHS Management

Track, Analyze, Report Health and Safety Activities and Data for AEC Worksites

Learn moreHR & Compliance

HR & Compliance

Provide role-specific knowledge, develop skills, and improve employee retention with career development training.

Casinos & Gaming

Casinos & Gaming

Learn MoreAnti-Money Laundering Training

Anti-Money Laundering Training

Reduce risk in casino operations with Title 31 and Anti-Money Laundering training compliance

Learn moreEmployee Training

Employee Training

Deliver our leading AML and casino-specific online courses to stay compliant with national and state standards

Learn moreLearning Management System (LMS)

Learning Management System (LMS)

Streamline training operations, increase employee effectiveness, and reduce liability with our LMS for casinos

Learn moreEHS Management

EHS Management

Simplify incident reporting to OSHA and reduce risk with detailed investigation management

Learn moreEmployee Scheduling

Employee Scheduling

Equip your employees with a mobile app to manage their schedules and simplify your 24/7 staff scheduling

Learn moreArdentSky Compliance Suite NEW

ArdentSky Compliance Suite

Integrated solution purpose-built for modern regulatory demands. ArdentSky, delivers automated, integrated compliance solutions trusted by global gaming manufacturers, operators, and suppliers.

Learn moreIndustries

Resources

Company

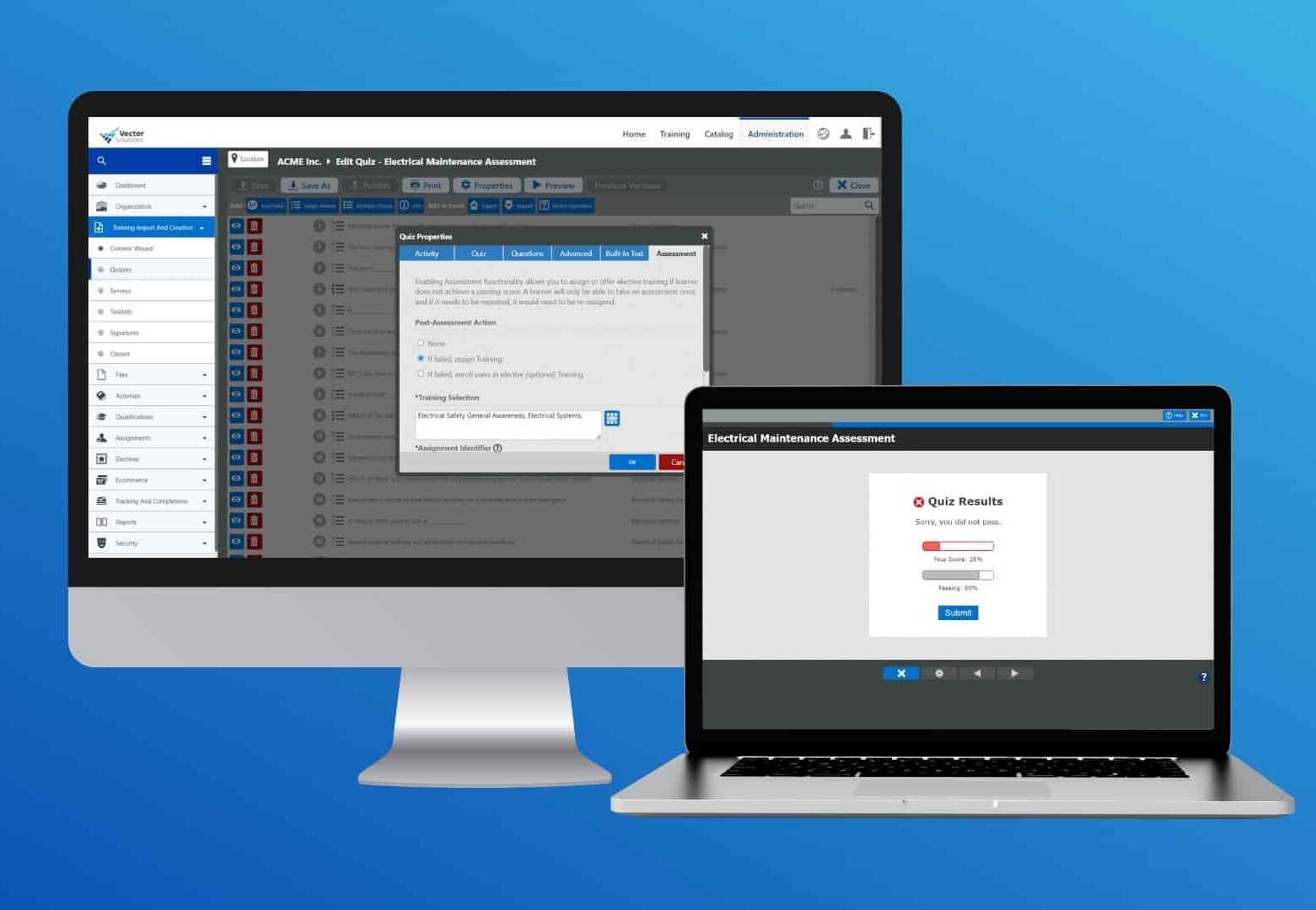

Course Center

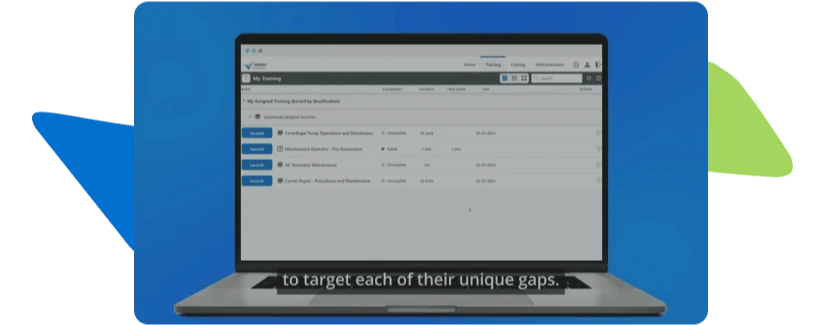

Identify skills gaps earlier, automate follow-up training, and verify workforce readiness and compliance with Vector Solutions’ Competency Assessment tool. Pre-assess knowledge, reinforce critical learning, and help employees build the skills they need to work safely and effectively.

Built into Vector’s LMS, Competency Assessment helps you identify skills gaps, improve onboarding, and automate targeted training based on each employee’s results. The outcome is less manual work, more consistent development, and stronger workforce readiness.

Create assessments and training paths aligned to roles, risks, and operational requirements.

Use Competency Assessments and training to strengthen onboarding, reinforce critical knowledge, and support employee growth.

Create and manage assessments, identify knowledge gaps, and automatically assign follow-up training based on results. Competency Assessment helps turn evaluation into action without adding manual steps.

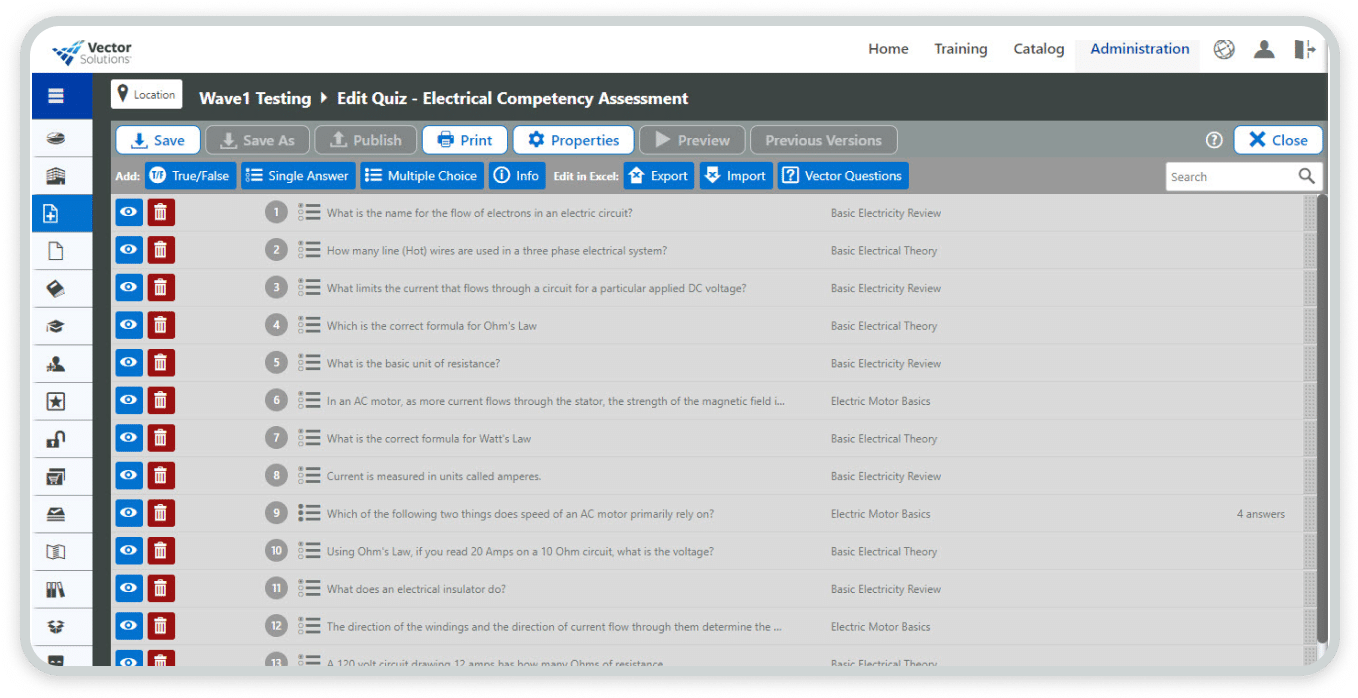

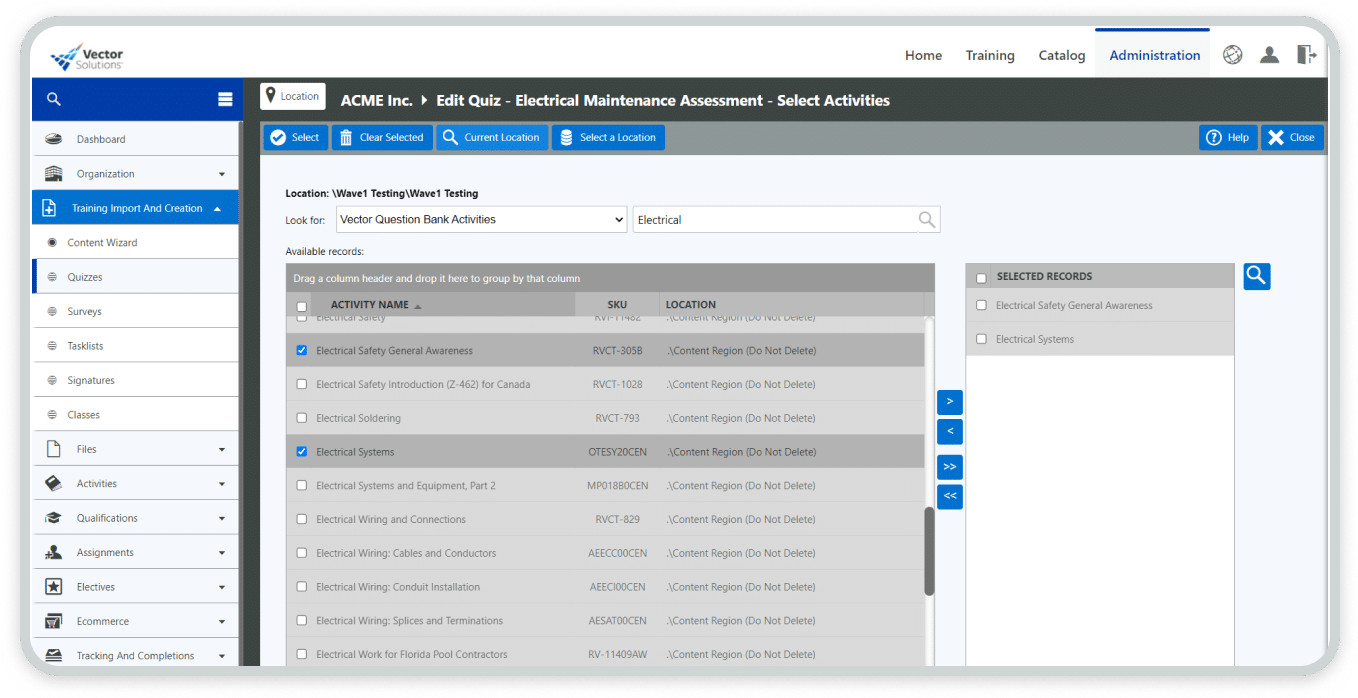

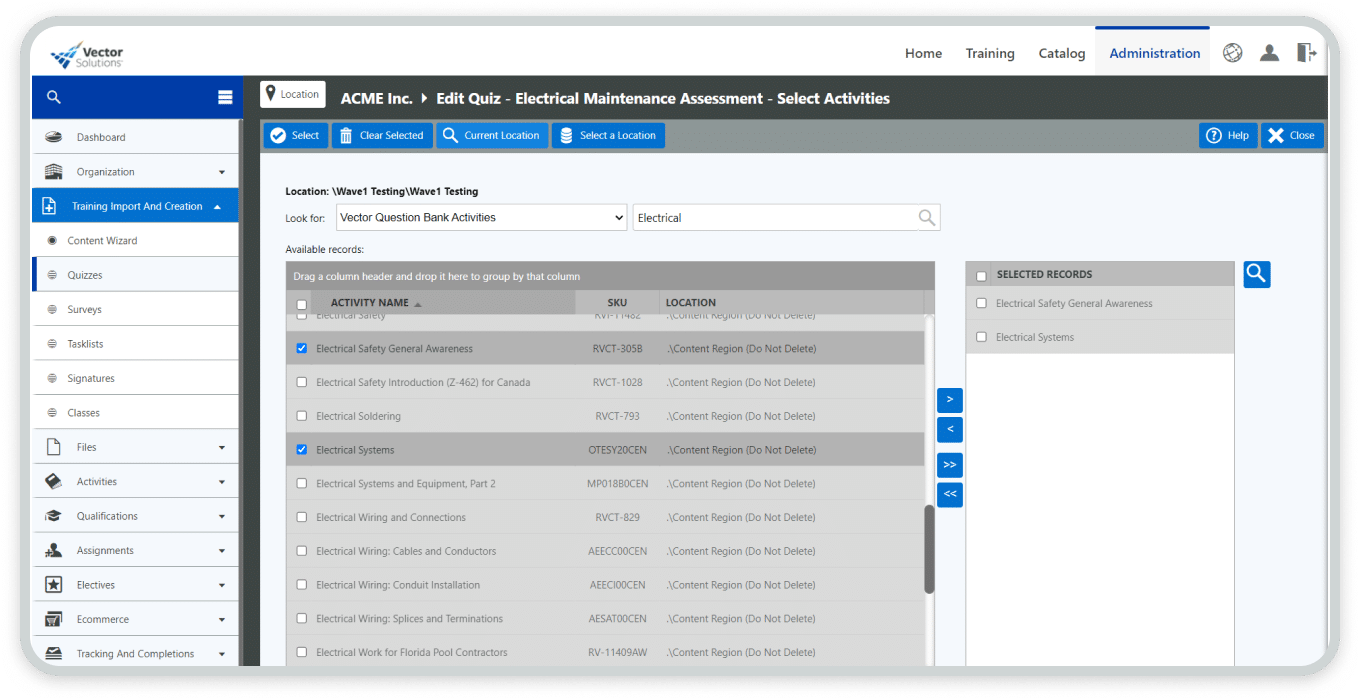

Build assessments that reflect real job requirements using Vector course questions or your own content, so you can evaluate competency more consistently and accurately.

Speed up assessment creation with Vector-built questions tied to relevant courses, helping you save time while delivering more consistent evaluations.

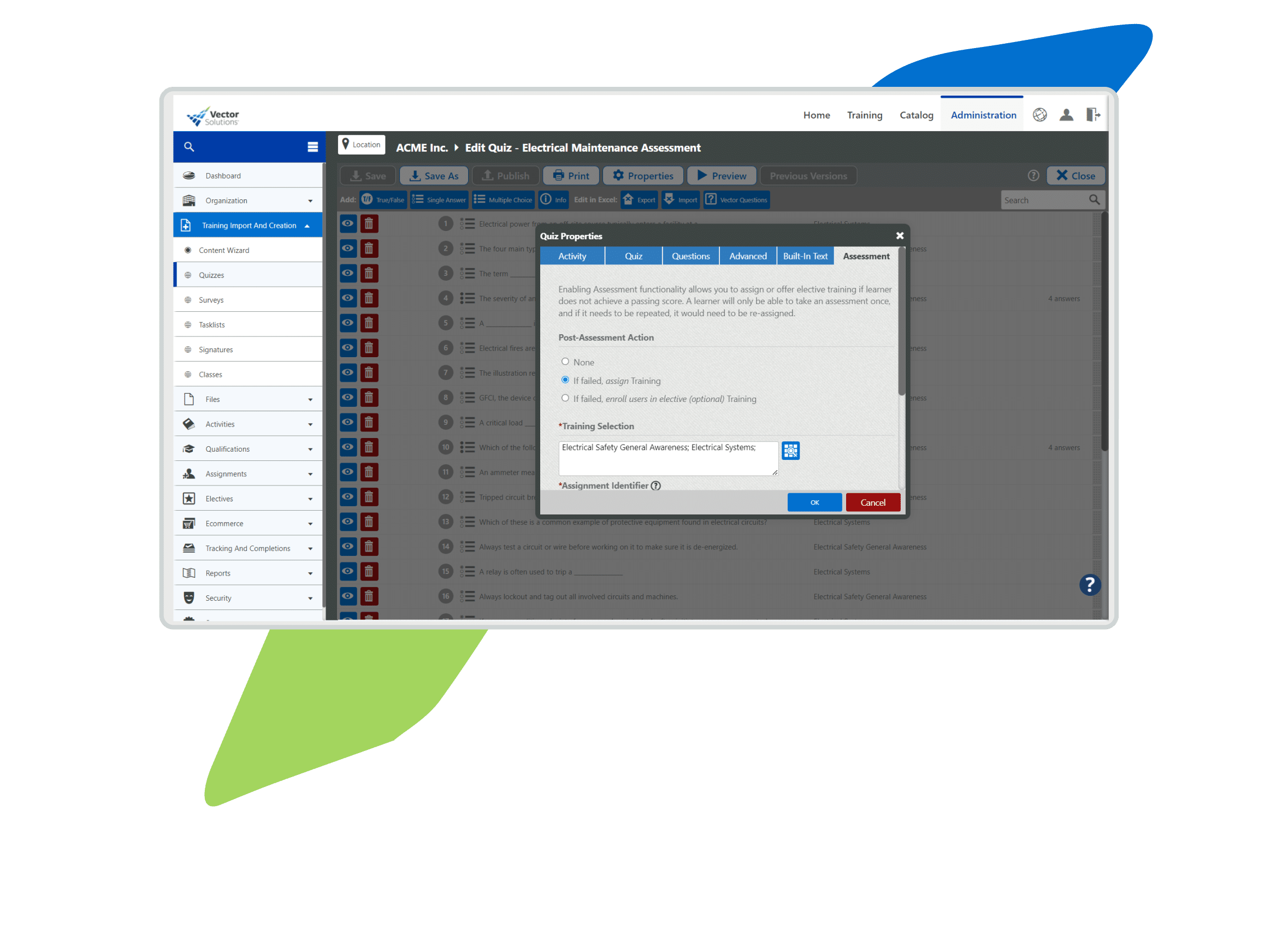

Automatically assign required or optional training based on assessment performance, so employees get the right follow-up without manual intervention.

Reporting allows you to monitor results, identify persistent gaps, and build smarter training plans that improve readiness over time.

Build targeted assessment and training workflows that close skills gaps, reinforce critical knowledge, and help employees perform their jobs more safely, consistently, and effectively.

Competency Assessments save you time creating relevant quizzes, assigning follow-up training, and reporting on results.

Automatically connect failed assessments to the right training so learning gaps are addressed quickly.

Support employee development with more relevant training paths that build confidence and strengthen retention.

Competency Assessments verify that employees understand critical information before work begins and after training is completed.

“Once we transitioned over to Vector, two and a half days' worth of work per week was freed up. You can assess people where they are and if there is training needed and automatically assign it and recommend courses for them to take. Which is a game changer.”

LaRuthie Mason

Corporate Manager of Technical Education

Read the customer story

“The Vector LMS has the full solution we need - the training, the safety, the tracking, the engagement, the support, the cost…all of those things are right there. I dare other organizations to do what Vector Solutions has done.”

Director of Training and Development

Read the customer story

“I'm very optimistic, very excited about the direction we're going with training. I think we're still just at the tip of the iceberg with what we're going to be able to do now that we have Vector Solutions.”

Instructional Designer & Training Facilitator

Read the customer story

Improve performance and ensure compliance with industry-specific learning management needs.

Protect teams with our leading risk intelligence and safety communications platform.

Store, organize, and access safety data sheets (SDS) and chemical inventory online.
